How It Works

Functional tests are woven into the migration lifecycle; they are not a separate step you run after the fact. Here is how they fit in:

Roadmap Generation

The roadmap is established, defining the ordered set of milestones for your migration.

Test Generation

Morph dispatches testing agents for each milestone. Backend testing agents explore the source code, run the application, and record expected behavior for APIs and CLIs. Frontend testing agents navigate the application in a browser, capture screenshots at each step, and optionally record video. This ensures tests capture production-realistic details — bootstrap sequences, authentication, headers, visual layout, and more.
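As a rough illustration of what "recording expected behavior" for a CLI can mean, the Python sketch below runs a command and captures its exit code, stdout, and stderr. The function name `record_cli` and the record layout are hypothetical and are not Morph's internal format; a Python one-liner stands in for a real command such as `calc add 2 3`.

```python
import subprocess
import sys


def record_cli(argv, stdin_text=""):
    """Run a command and capture the fields a CLI test compares (hypothetical layout)."""
    proc = subprocess.run(
        argv,
        input=stdin_text,
        capture_output=True,
        text=True,
        timeout=30,
    )
    return {
        "command": argv,
        "exit_code": proc.returncode,
        "stdout": proc.stdout,
        "stderr": proc.stderr,
    }


# Stand-in for a real CLI: a one-liner that adds its two arguments.
argv = [
    sys.executable,
    "-c",
    "import sys; print(int(sys.argv[1]) + int(sys.argv[2]))",
    "2",
    "3",
]
expected = record_cli(argv)
print(expected["exit_code"], expected["stdout"].strip())
```

A record like this, captured from the original application, can later be compared against the same invocation run on the migrated code.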

Milestone Execution

During milestone execution, Morph agents run your migrated code and actively verify that functional tests pass as part of the implementation loop. Results appear in each milestone’s test summary and in the project-level Functional Testing dashboard.

What Makes These Tests Different

| Aspect | Traditional test suites | Morph functional tests |
|---|---|---|
| Authoring | Written manually by engineers | Auto-generated by agents exploring source code |
| Basis | Specification or developer assumptions | Observed real behavior of the running application |
| Scope | Varies by team discipline | Systematically covers every discovered entry point |
| Comparison | Pass/fail against assertions | Side-by-side origin vs. target response comparison |
| Visual | Not covered | Browser-based screenshot and video capture |

Supported Application Types

Morph functional testing covers three categories:

| Type | Entry point | Input | Output compared |
|---|---|---|---|
| API | Endpoint (e.g., /add, /health) | HTTP method, headers, request body | Status code + response body |
| CLI | Command or subcommand (e.g., calc add) | Arguments, flags, stdin | Exit code + stdout/stderr |
| UI | Page or user flow | Browser interactions (clicks, input) | Screenshots at each step, optional video recording |
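To make the "Output compared" column concrete for the two backend types, here is a minimal Python sketch. The names `ApiResult`, `CliResult`, `api_matches`, and `cli_matches` are hypothetical, and strict equality is an assumption; Morph's actual matching may normalize or ignore some fields.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ApiResult:
    """Observed output of one API call (hypothetical shape)."""
    status_code: int
    body: str


@dataclass(frozen=True)
class CliResult:
    """Observed output of one CLI invocation (hypothetical shape)."""
    exit_code: int
    stdout: str
    stderr: str


def api_matches(origin: ApiResult, target: ApiResult) -> bool:
    # API tests compare status code + response body.
    return (origin.status_code, origin.body) == (target.status_code, target.body)


def cli_matches(origin: CliResult, target: CliResult) -> bool:
    # CLI tests compare exit code + stdout/stderr.
    return (origin.exit_code, origin.stdout, origin.stderr) == (
        target.exit_code,
        target.stdout,
        target.stderr,
    )


origin = ApiResult(200, '{"result": 5}')
target = ApiResult(200, '{"result": 5}')
print(api_matches(origin, target))  # True
```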

The Functional Testing Dashboard

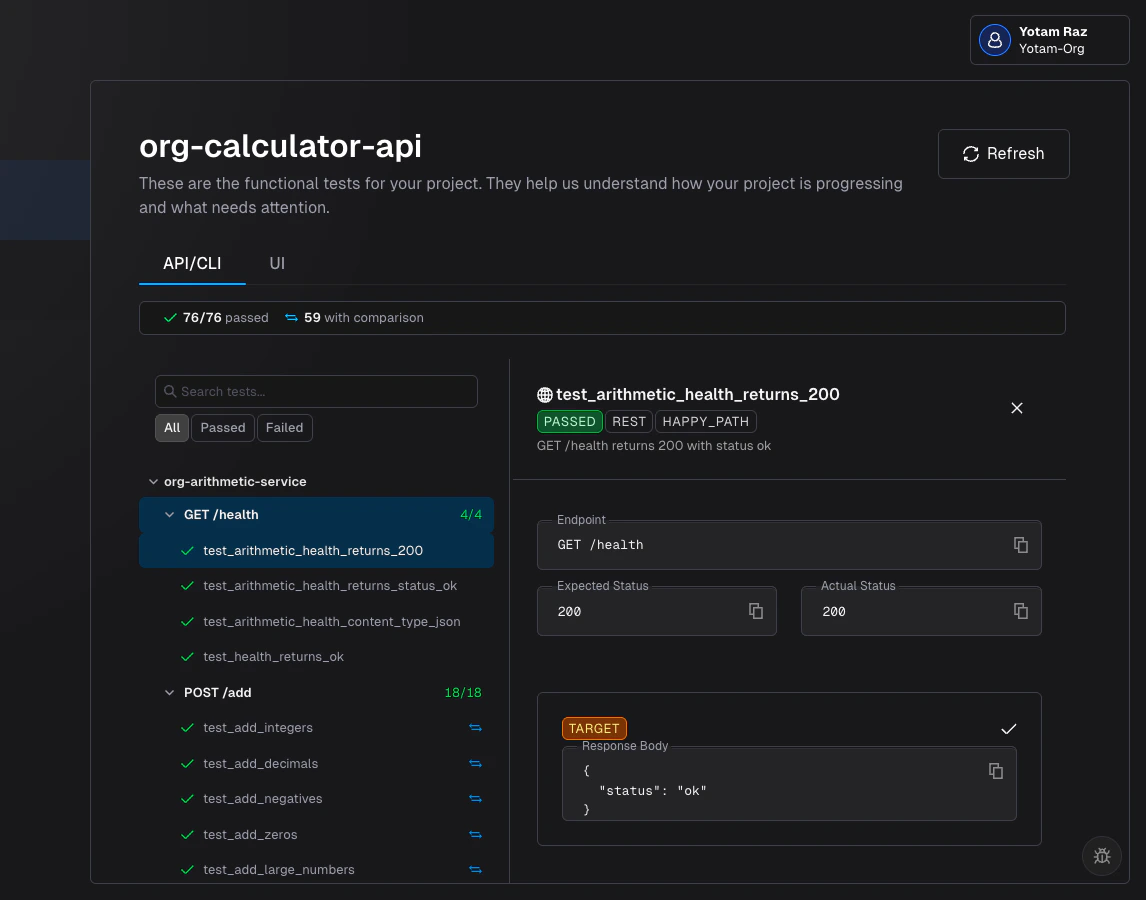

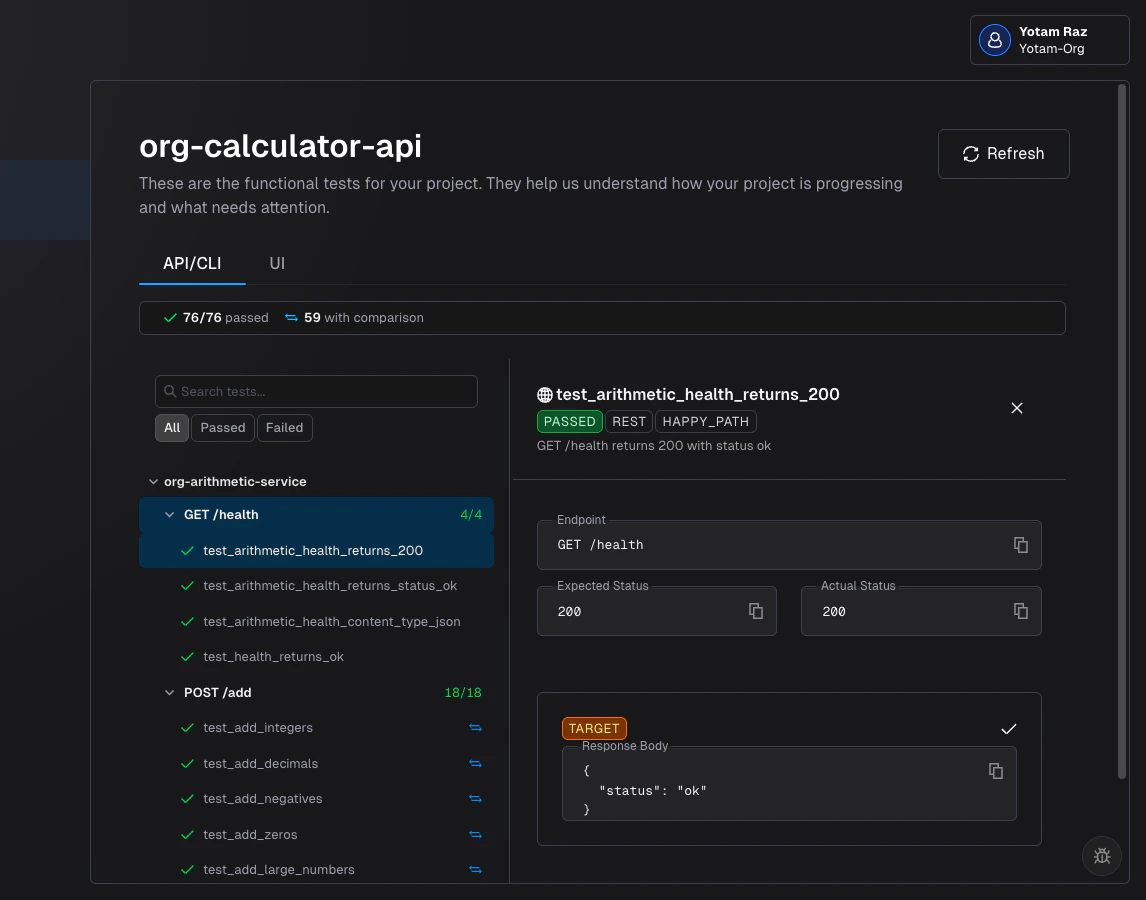

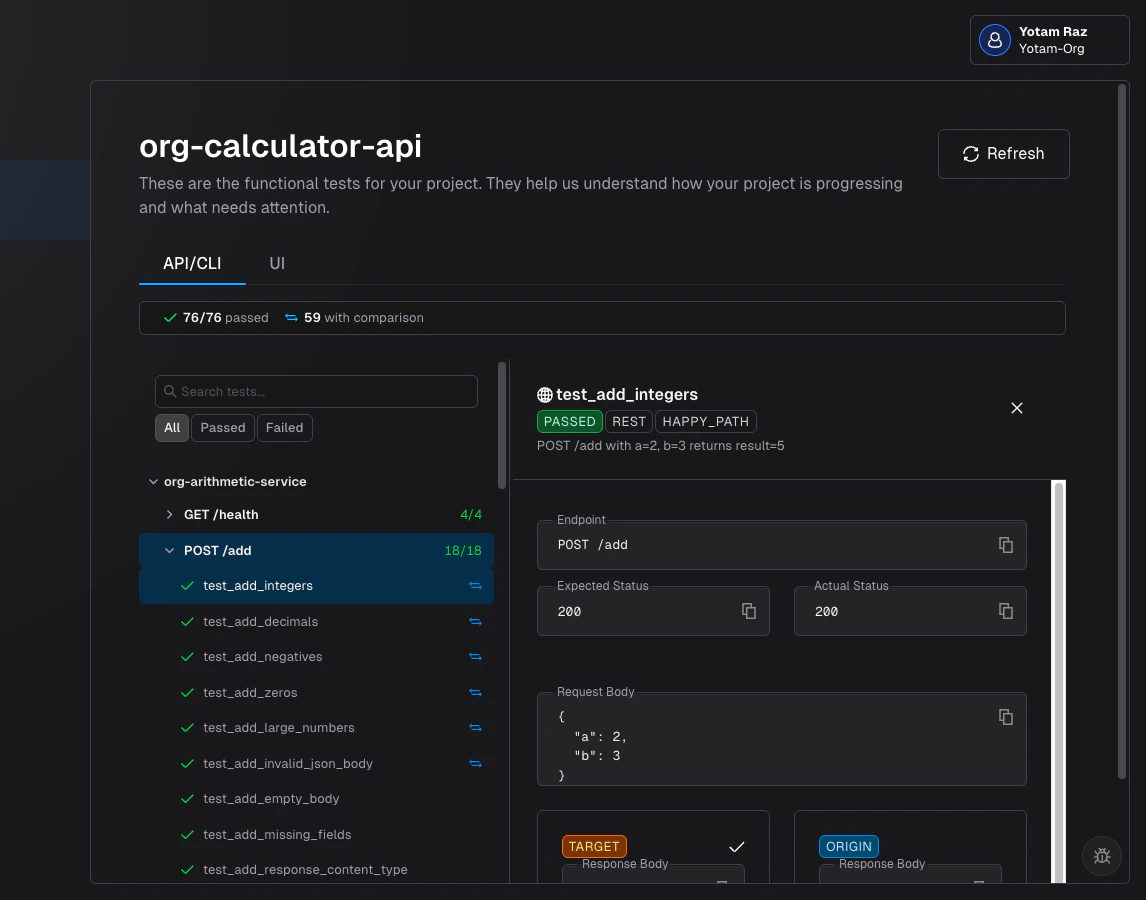

The project-level dashboard is accessible from the sidebar. It organizes all test results across two tabs: API/CLI for backend tests and UI for frontend visual tests. If the project only has one type of test, only that tab is shown.

API/CLI Tab

The API/CLI tab shows backend functional test results: API endpoint responses and CLI command outputs compared between origin and target.

Summary Bar

At the top, a summary bar provides an at-a-glance view:

- Passed/Total: How many tests pass out of the total (e.g., 12/15 passed)

- Failed: Count of failing tests (shown only when failures exist)

- With comparison: How many tests have origin data available for side-by-side comparison

- Avg duration: Average test execution time
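The four summary values can be derived from a flat list of test results. The sketch below shows one way to compute them; `CaseResult`, `summarize`, and the millisecond unit are assumptions made for illustration, not the dashboard's actual data model.

```python
from dataclasses import dataclass


@dataclass
class CaseResult:
    """One backend test outcome (hypothetical shape)."""
    name: str
    passed: bool
    duration_ms: float
    has_origin: bool  # True when origin data exists for comparison


def summarize(results):
    total = len(results)
    passed = sum(1 for r in results if r.passed)
    failed = total - passed
    summary = {
        "passed_total": f"{passed}/{total} passed",
        "with_comparison": sum(1 for r in results if r.has_origin),
        "avg_duration_ms": (
            sum(r.duration_ms for r in results) / total if total else 0.0
        ),
    }
    if failed:
        # Mirrors the dashboard: the Failed count appears only when failures exist.
        summary["failed"] = failed
    return summary


results = [
    CaseResult("GET /health returns 200", True, 120.0, True),
    CaseResult("POST /add adds integers", True, 80.0, True),
    CaseResult("POST /add rejects bad input", False, 400.0, False),
]
summary = summarize(results)
print(summary["passed_total"])  # 2/3 passed
```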

Sidebar Tree

The left sidebar organizes tests in a hierarchy:

- Repository: Each repo in the project is a collapsible group

- Entity: Within each repo, tests are grouped by entry point:

  - For APIs: GET /health, POST /add, etc.

  - For CLIs: $ calc add, $ calc health, etc.

- Individual tests: Each test case under its entity, showing pass/fail status, duration, and a comparison icon if origin data is available
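The repository, entity, test hierarchy amounts to a two-level grouping of flat test records. A minimal sketch follows, assuming each record carries `repo`, `entity`, and `name` keys; the key names, repository names, and test names are all hypothetical.

```python
from collections import defaultdict


def build_tree(tests):
    """Group flat test records into repo -> entity -> [test names]."""
    tree = defaultdict(lambda: defaultdict(list))
    for t in tests:
        tree[t["repo"]][t["entity"]].append(t["name"])
    # Convert to plain dicts so the result prints and serializes predictably.
    return {repo: dict(entities) for repo, entities in tree.items()}


tests = [
    {"repo": "calc-service", "entity": "GET /health", "name": "returns 200"},
    {"repo": "calc-service", "entity": "POST /add", "name": "adds two integers"},
    {"repo": "calc-service", "entity": "POST /add", "name": "rejects missing operand"},
    {"repo": "calc-cli", "entity": "$ calc add", "name": "adds 2 and 3"},
]
tree = build_tree(tests)

for repo, entities in tree.items():
    print(repo)
    for entity, names in entities.items():
        print("  " + entity)
        for name in names:
            print("    " + name)
```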

Detail Panel

Selecting a test opens the detail panel on the right. The content adapts to the protocol type.

For API tests:

- Endpoint: HTTP method and path (e.g., POST /add)

- Expected vs Actual Status: Status codes with mismatch highlighting

- Request Body: The payload sent to both origin and target

- Target Response: Status code and response body from the migrated application

- Origin Response: Response body from the original application (when comparison data is available)

- Match indicators: Response Match / Response Mismatch tags with an optional diff view

For CLI tests:

- Command: The full command invocation (e.g., $ calc add 2 3)

- Expected vs Actual Exit Code: Exit codes with mismatch highlighting

- Target stdout/stderr: Output from the migrated application

- Origin output: Output from the original application (when comparison data is available)

- Match indicators: Output Match / Output Mismatch tags with a diff view

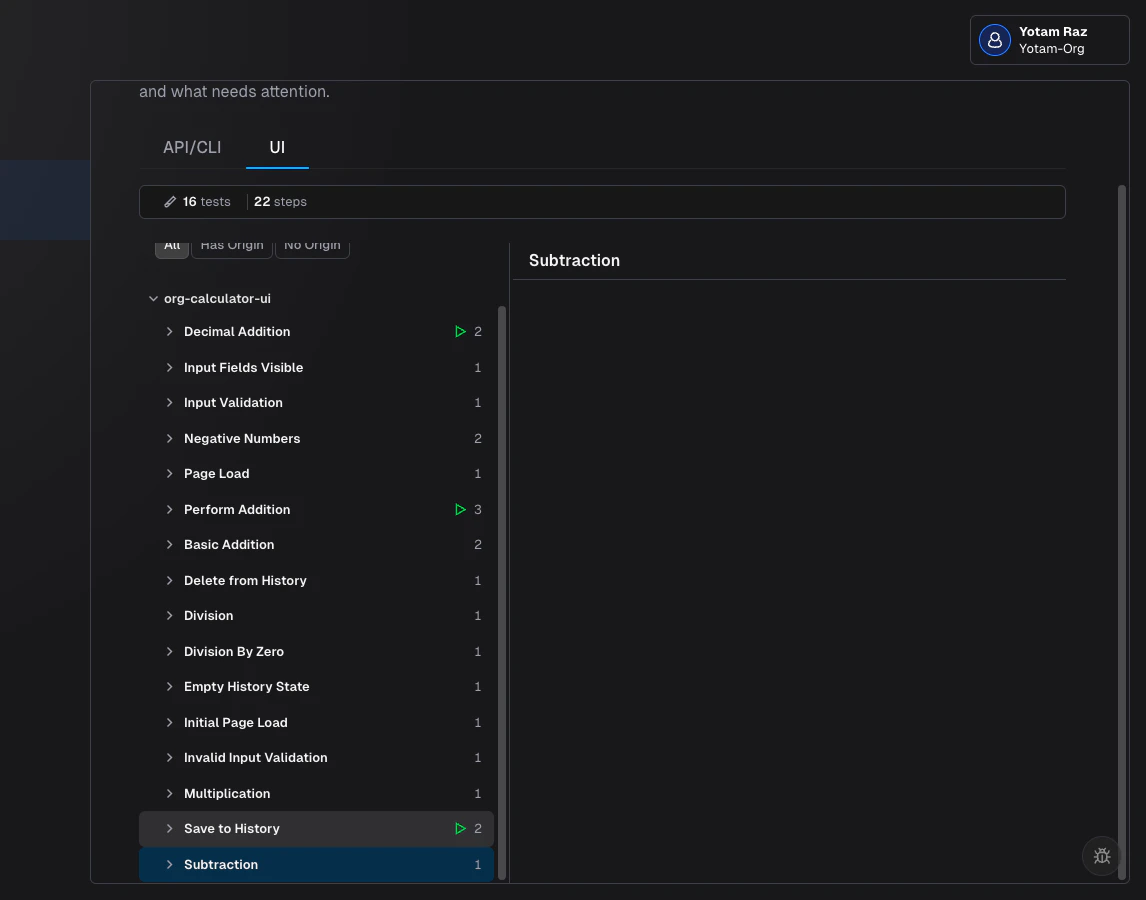
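The match tags and diff view described for the detail panel can be approximated with Python's standard `difflib` module. This is only a sketch: the function name `compare_bodies` is hypothetical, and the line-based comparison (which ignores a difference in the trailing newline) is an assumption rather than Morph's documented behavior.

```python
import difflib
from typing import List, Tuple


def compare_bodies(origin_body: str, target_body: str) -> Tuple[str, List[str]]:
    """Return a match tag plus unified-diff lines (empty when the bodies match)."""
    origin_lines = origin_body.splitlines()
    target_lines = target_body.splitlines()
    tag = "Response Match" if origin_lines == target_lines else "Response Mismatch"
    diff = list(
        difflib.unified_diff(
            origin_lines,
            target_lines,
            fromfile="origin",
            tofile="target",
            lineterm="",
        )
    )
    return tag, diff


tag, diff = compare_bodies('{"result": 5}', '{"result": 6}')
print(tag)
for line in diff:
    print(line)
```

The same function applies to CLI output by passing stdout or stderr text instead of a response body.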

UI Tab

The UI tab shows frontend visual test results: browser screenshots and recordings captured from the target application, with optional side-by-side comparison against the origin.

Summary Bar

- Tests — Total number of UI test flows

- Steps — Total screenshot steps across all tests

- With origin comparison — How many tests have origin screenshots for side-by-side comparison

Sidebar Tree

Tests are organized by repository, then by test name. Each test entry shows:

- Step count: Number of screenshot steps

- Recording icon: Indicates a video recording is available

- Origin badge: Shows how many steps have origin screenshots (e.g., 3/5 steps have origin)
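The origin badge is a ratio of steps that have an origin screenshot to total steps. A small sketch, assuming a hypothetical `Step` record in which a missing origin screenshot is represented as `None`; the file names are invented for the example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Step:
    """One screenshot step of a UI test (hypothetical shape)."""
    label: str
    target_screenshot: str
    origin_screenshot: Optional[str] = None  # None when no origin capture exists


def origin_badge(steps: List[Step]) -> str:
    with_origin = sum(1 for s in steps if s.origin_screenshot is not None)
    return f"{with_origin}/{len(steps)} steps have origin"


steps = [
    Step("Open login page", "target-1.png", "origin-1.png"),
    Step("Enter credentials", "target-2.png", "origin-2.png"),
    Step("Click login button", "target-3.png", "origin-3.png"),
    Step("Open settings", "target-4.png"),
    Step("Log out", "target-5.png"),
]
print(origin_badge(steps))  # 3/5 steps have origin
```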

Step Viewer

Selecting a test step opens the viewer on the right:

- Step navigation: Arrow buttons or keyboard arrows to move between steps

- Step label: Description of what the step captures (e.g., “Click login button”)

- Screenshot comparison: When the test has origin data, screenshots are displayed side by side (Origin on the left, Target on the right). Click to zoom.

- Recording mode: Toggle between Steps (step-by-step screenshots) and Recording (full video playback) when a recording exists

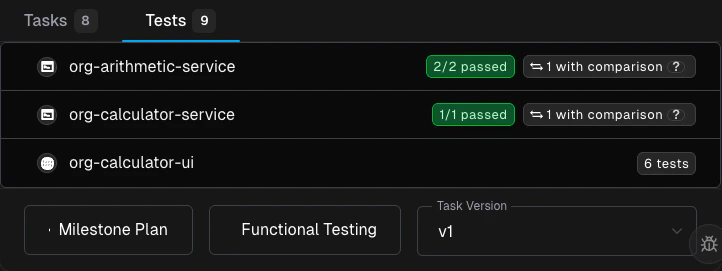

Milestone Test Summary

Each milestone card on the roadmap displays a test summary after execution completes. The summary shows per-repo results:

- Backend tests: Repository name with passed/total count and a comparison indicator

- Frontend tests: Repository name with total test count and origin comparison count

Tips

- Review tests early. As soon as tests are generated, skim them in the milestone test summary. If an entry point is missing, it may indicate the agent couldn’t reach it — check your lifecycle setup configuration.

- Use the dashboard for stakeholder updates. The passed/total ratio and origin comparison counts are intuitive, non-technical measures of migration completeness.

- Combine with rules. If tests reveal a recurring pattern (e.g., missing headers, wrong status codes), create a Rule so Morph handles it in future milestones.

- Filter to failures. On the API/CLI tab, use the Failed filter to focus your review on the tests that need attention.

Related Docs

- Milestones: The milestone lifecycle, statuses, and task dependencies

- Roadmap: High-level view of your migration plan

- Reviewing Pull Requests: Best practices for reviewing milestone PRs

- Lifecycle Setup: Configure how your project builds and runs